Product Page Optimization (PPO): App Store A/B Testing Guide

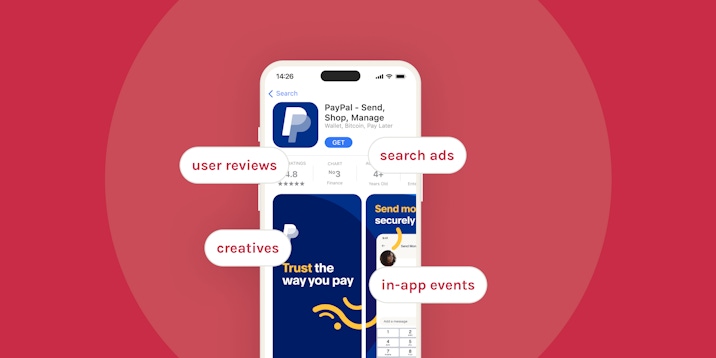

Creative A/B testing allows app developers to test different aspects of an app’s metadata and uncover the most effective asset for conversion rate optimization. Since its launch with iOS 15, Apple has provided app developers with a native A/B testing tool called product page optimization (PPO), giving you the opportunity to test up to 3 variants of an app page on live App Store traffic.

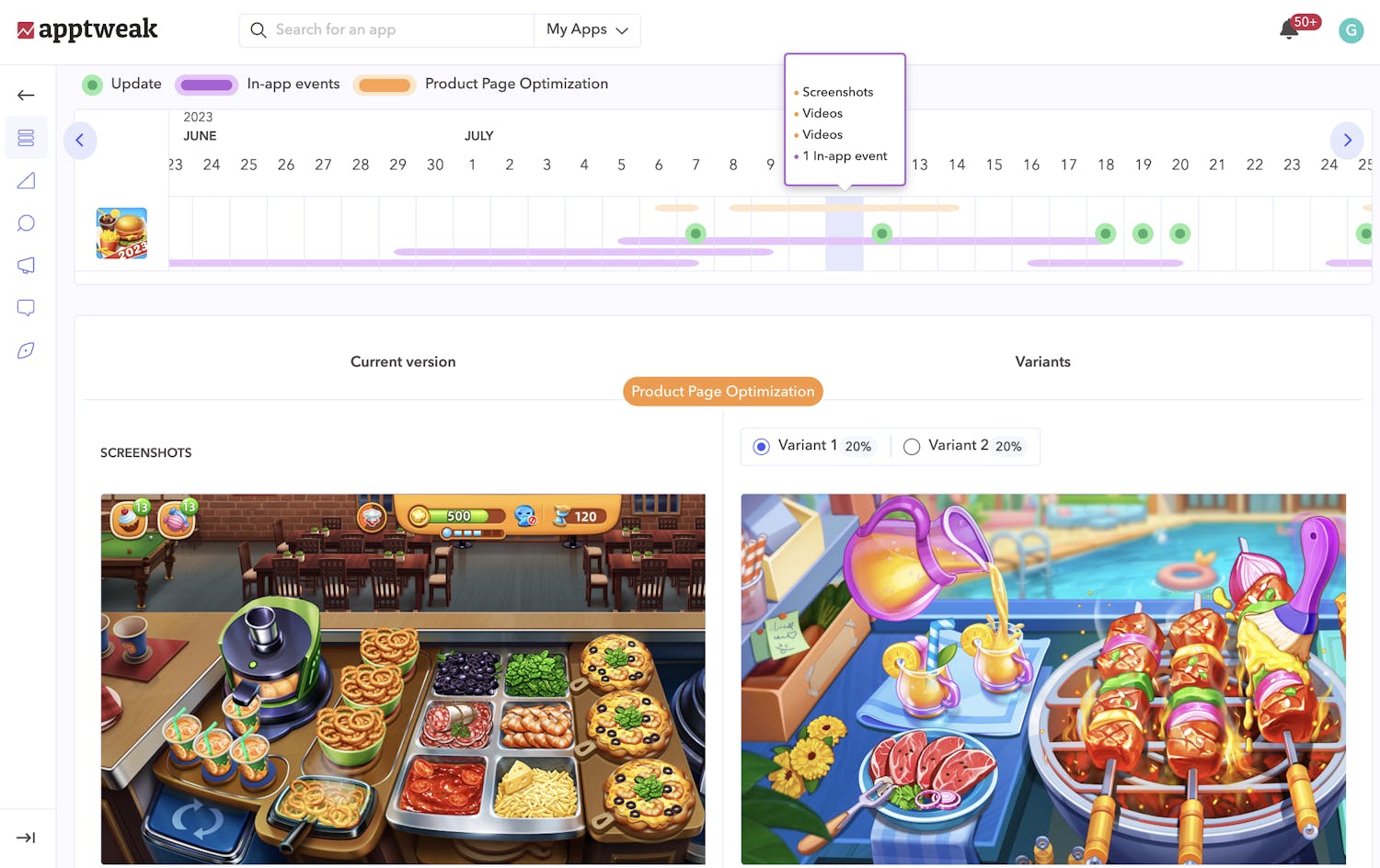

In AppTweak’s ASO Timeline, monitor and visualize PPO tests for any app or game on the App Store! See the creative elements your competitors are A/B testing and get powerful insights to increase your competitive advantage (also available for A/B tests on Google Play).

In this blog, we’ll look into how product page optimization works and explain different strategies to help you make the most of PPO.

What is product page optimization (PPO)?

Product page optimization is an iOS feature that lets you experiment with different variants of your product page assets, giving you a new way to optimize your conversion funnel on the App Store. By comparing variants, you can find out which ones are most effective at encouraging store visitors to download your app or game.

For example, the results of your PPO tests can provide insights into:

- The features or value propositions that resonate best with store visitors.

- The best content to localize to help drive conversions in different markets.

- The impact of seasonally relevant content in creatives.

- And more.

Expert Tip

Since product page optimization was released with iOS 15, only store visitors with iOS 15 or later installed on their device will see your A/B tests.

Why is product page optimization important for ASO?

App marketers and developers have hailed product page optimization as a game-changer for ASO. Native to the App Store, this A/B testing feature helps optimize your app’s conversion rate and stay ahead of the competition. It is a powerful tool to understand how creative changes affect the decision-making process of store visitors and, in turn, can help you create your winning creative set.

Which assets can you test with PPO?

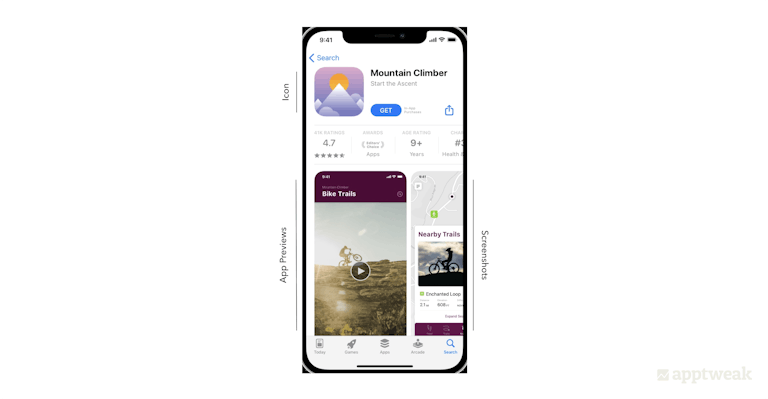

With PPO, you can test 3 types of app creatives on the App Store – screenshots, app icons, and app preview videos – and compare up to 3 alternative product page versions (treatments) against the original.

Here are some things to keep in mind before configuring your PPO tests:

- You can select the percentage of users to whom the treatments will be randomly shown, and also choose the traffic allocation for the 3 different versions.

- Tests can run for up to 90 days.

- You may also localize the test in different languages, but you can only do so for languages for which you have created a local app page.

- Finally, you will need to submit the product page test assets for review, independent from the app binary or app build. When A/B testing app icons, you have to submit the different versions in the app binary and go through the standard review process for your app build.

Expert Tip

Since the release of iOS 17, ongoing product page optimization tests are no longer interrupted when a new app version is published via App Store Connect. Learn more about iOS 17 announcements in this blog.

PPO vs. store listing experiments

Some of the ways PPO differs from Google Play’s store listing experiments are as follows:

- One major difference is that Apple only allows creative assets to be tested. On Google Play, you can also A/B test short and long descriptions.

- Product page optimization requires you to submit your creative tests for review by Apple. Google experiments allow you to set up your tests anytime.

- Furthermore, you do not have to release a new app version each time you run a test on your Android icon. On the other hand, PPO icon experiments require you to include your icon in the binary of your new app build.

- Finally, the maximum duration of PPO tests is 90 days. A/B tests on Google Play can run for an unlimited period.

Strategies to test different assets with PPO

Before starting to create product page tests with PPO, think through what you want to test and why. For some, revamping screenshots will be the main priority; for others, whether to add an app preview video is the principal concern. Assess which elements of your brand or product matter most to your current users and how you can make your app stand out.

Consider these best practices when testing different assets of your product page with PPO:

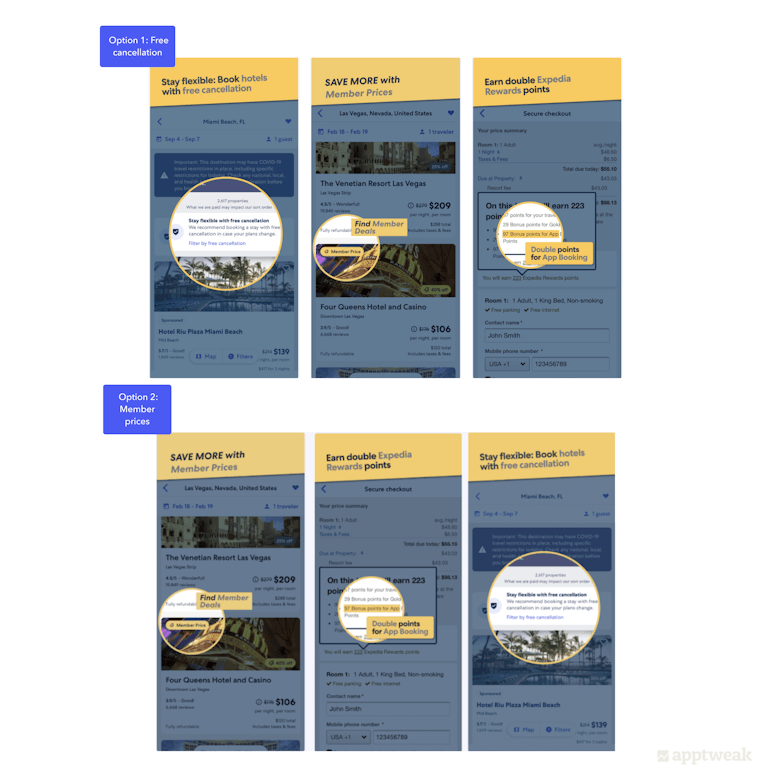

Test by features

Test different elements to identify the most appealing features, value propositions, and engaging visuals of your app. Does highlighting the value proposition on screenshots lead to an increased conversion rate? Or does showcasing an existing feature bring more downloads?

For example, the travel app Expedia could experiment with which value proposition to show on the first screenshot – either the option to book with a free cancellation or the benefits of becoming a member. The test results would then help Expedia choose the creative that resonates with users the most and persuades more people to download the app.

Test by theme

Test seasonal content to find out whether updating your app icon or other assets to reflect seasonality helps increase your app conversion rate.

For instance, it is a good idea for shopping apps to experiment with creatives promoting deals and offers during holiday seasons and festivities to see which images convince users to download their app. Games can reflect the holiday season by testing different holiday-themed challenges or the seasons, such as snow or Halloween.

Expert Tip

If you want to test creatives for limited-time events, do it at the beginning rather than at the end of the event. This way, if the PPO test is successful, you can apply it early enough so it will run through the majority of the event and have the biggest impact.

Test by new content or characters

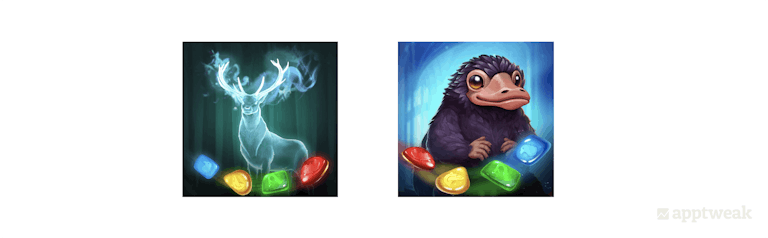

Test whether displaying new content leads to more engagement than regular content, or whether highlighting culturally relevant content leads to increased downloads in a specific location.

For instance, the game Harry Potter: Puzzles & Spells could run tests on its icon and include different Harry Potter characters to identify which one resonates best with its audience.

Test by creatives

This can include testing color variations of a screenshot background to find out which variation performs the best, or if adding an app preview video results in improved conversion rates.

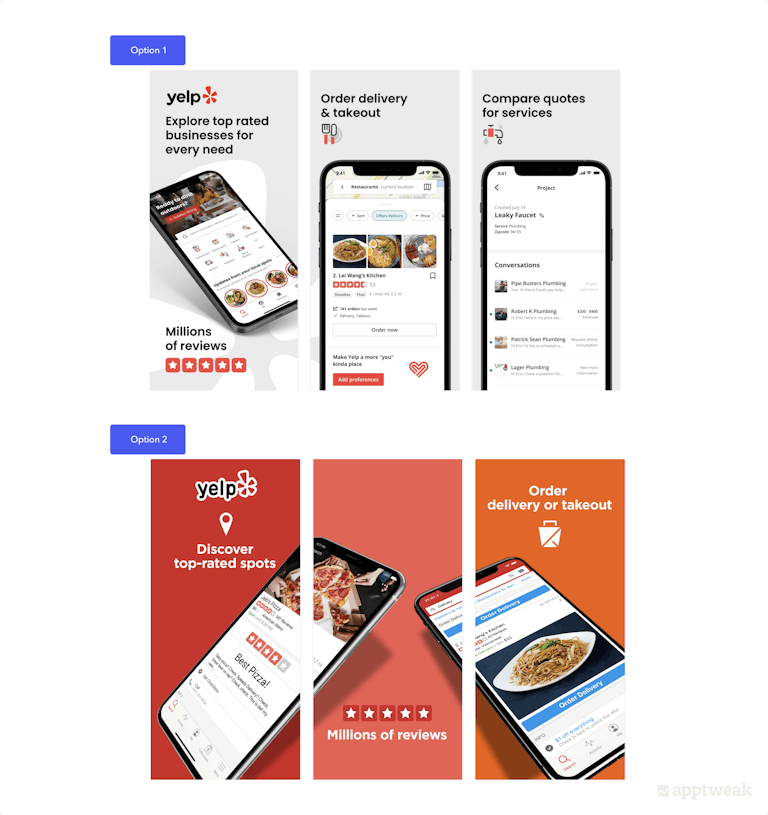

For instance, the food & drink app Yelp recently revamped the design of its screenshots. Now, with product page optimization, the app could have actually run an A/B test to better understand the conversion uplift before implementing.

How to see your competitors’ App Store A/B tests (PPO)

You can use AppTweak to easily monitor and visualize PPO tests for any app or game on the App Store:

Track your competitors’ PPO, A/B tests, in-app events, metadata updates, and more in the Timeline

In the Timeline, orange lines indicate any PPO tests detected for your app or your competitors. These insights come directly from Apple, allowing you to visualize the element tested (icon, screenshots, or preview video) and the real traffic distribution allocated to each variant.

With PPO data in AppTweak, get valuable insights into your competitors’ mobile marketing strategies, uncover the creative elements they’re testing, and easily identify the elements that resonate best with your market.

Key factors to consider before A/B testing on the App Store

To get the most out of your product page optimization (PPO) efforts, you should keep these factors in mind:

Traffic proportion

This is the percentage of visitors that will randomly view a treatment version of your product page assets. You can choose the amount of traffic you want to send to your original product page vs. alternate versions. For example, if you allocate 50% of your traffic to the test, the remaining 50% will be directed to the original product page. The 50% allocated to PPO will be distributed evenly between the treatments (if you have two treatments, each one will get 25% of your total traffic).
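The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names `traffic_shares` and `equal_split_fraction` are our own, and the input bounds are assumptions for the example rather than limits taken from App Store Connect.

```python
def traffic_shares(test_fraction: float, n_treatments: int) -> dict[str, float]:
    """Share of total traffic seen by the original page and by each treatment."""
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1 (assumed bounds)")
    if not 1 <= n_treatments <= 3:
        raise ValueError("PPO allows up to 3 treatments")
    # Traffic not sent to the test stays on the original page.
    shares = {"original": 1 - test_fraction}
    # The test traffic is divided evenly between the treatments.
    for i in range(n_treatments):
        shares[f"treatment_{i + 1}"] = test_fraction / n_treatments
    return shares


def equal_split_fraction(n_treatments: int) -> float:
    """Test fraction that gives the original and every treatment the same share."""
    return n_treatments / (n_treatments + 1)


print(traffic_shares(0.5, 2))
# {'original': 0.5, 'treatment_1': 0.25, 'treatment_2': 0.25}
print(equal_split_fraction(3))
# 0.75
```

Note that an equal split across the original and 3 treatments means sending 75% of traffic to the test, so that every version receives 25%.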

Expert Tip

We recommend administering each of your variants to an equal number of store visitors (e.g. 50/50 or 33/33/33) to compare apples with apples.

Hypothesis

Running A/B tests for the sake of doing it is bound to fail. Instead, you want to run A/B tests when you have a hypothesis in mind that you’re uncertain about and would like to test. A hypothesis will usually be formulated as follows: “If I [cause], then [effect], because [rationale].” For example, “If I change my icon to a pizza, my conversion rate will increase by 5%, because most of my users order pizza.” Try to be as specific as possible to have a solid hypothesis.

Learn the ins and outs of A/B testing in this simple guide

Assets and treatments

Think through the product page asset you want to include in the test and the number of versions you will create. We recommend testing one asset at a time to avoid a cluttered result.

Localization

Ask yourself which localizations are worth including in your test. For instance, if you want to perform bold A/B tests for your English app page but have to adhere to strict brand guidelines and don’t want to expose your main audience to the test, you can carry out the A/B test instead in Australia or New Zealand, which have much smaller audiences than the USA. You should also keep in mind that when adding more than one locale to your test, results will be presented for all locations at once and not show whether a variant performed better in one locale and not another.

Test duration

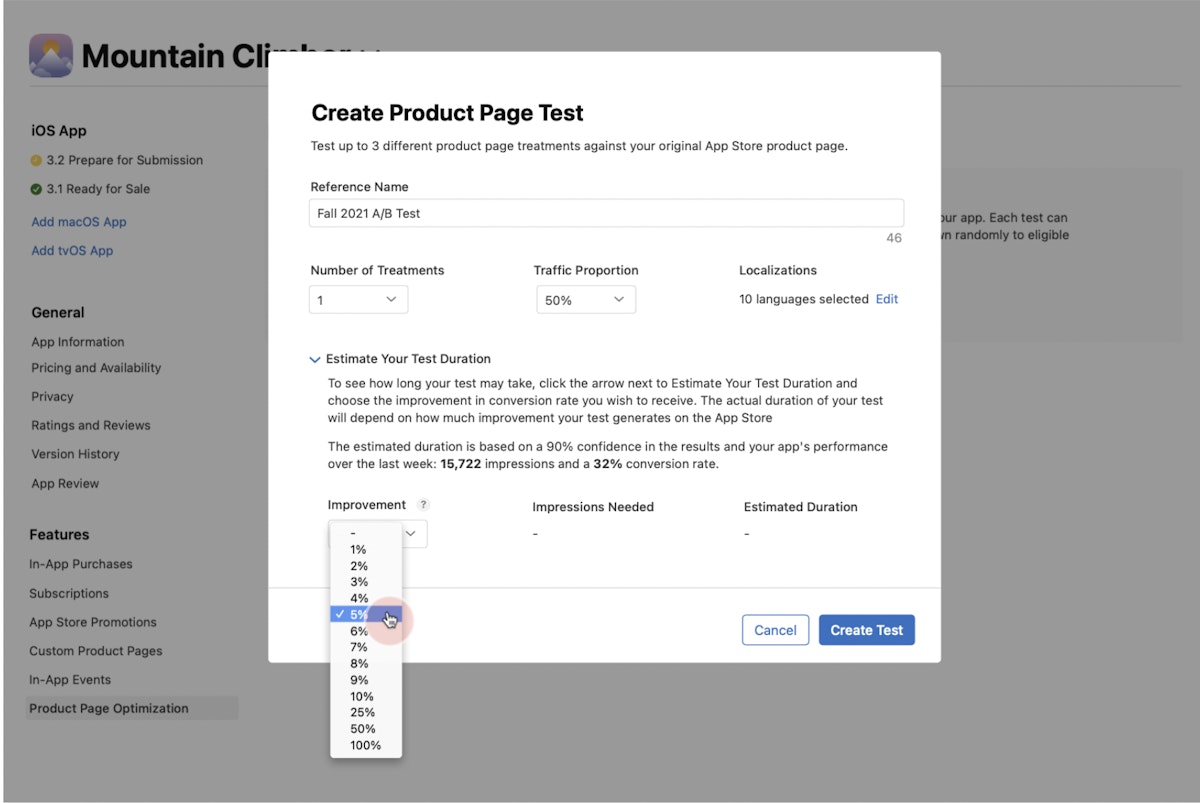

Consider the duration of your test. When launching your A/B test on the App Store, the tool provides a time estimate on the basis of the performance of your app product page, setting a target of 90% confidence. You need to choose your desired improvement value to get the estimate. The test should run until a considerable size of your potential users has visited all of the versions tested. However, tests cannot run longer than 90 days.
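To get a feel for how the desired improvement drives test duration, here is a rough sketch using a standard two-proportion sample-size approximation. It is not Apple's estimator, whose calculation is not described here. The 80% power setting, the function names, and the example traffic figures are all assumptions made for illustration.

```python
from math import ceil
from statistics import NormalDist

MAX_TEST_DAYS = 90  # PPO tests cannot run longer than this


def visitors_per_variant(baseline_cvr: float, relative_lift: float,
                         confidence: float = 0.90, power: float = 0.80) -> int:
    """Visitors each variant needs to detect the lift (normal approximation)."""
    if not 0 < baseline_cvr < 1 or relative_lift <= 0:
        raise ValueError("need 0 < baseline_cvr < 1 and a positive relative_lift")
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)  # assumed 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def estimated_days(baseline_cvr: float, relative_lift: float,
                   daily_visitors: float, test_fraction: float,
                   n_treatments: int) -> int:
    """Days until the slowest-filling variant has enough visitors."""
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1")
    needed = visitors_per_variant(baseline_cvr, relative_lift)
    per_treatment = daily_visitors * test_fraction / n_treatments
    original = daily_visitors * (1 - test_fraction)
    return ceil(needed / min(per_treatment, original))


# Hypothetical app: 3% conversion rate, 10,000 daily visitors, equal 4-way split,
# hoping to detect a 10% relative improvement.
days = estimated_days(0.03, 0.10, 10_000, 0.75, 3)
verdict = "fits within" if days <= MAX_TEST_DAYS else "exceeds"
print(f"{days} days, which {verdict} the {MAX_TEST_DAYS}-day limit")
```

The smaller the improvement you want to detect, or the fewer visitors each variant receives, the longer the test needs to run, which is why low-traffic apps can struggle to finish a test within 90 days.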

Challenges of A/B testing with product page optimization

A/B testing on the App Store has not yet lived up to many developers’ expectations. Instead, developers face a number of challenges:

- Product page optimization variants are only shown to App Store users with iOS 15 or later. Users coming from external channels or older versions will see the default product page.

- Before testing, all of the test treatments (icons, screenshots, and app previews) must be reviewed by the App Store, and this can take up to 24 hours. App icons need to be included in the app binary of your next version update.

- PPO will extend through the entire customer journey. Since the icon variants are in the app binary, the variant users see in the store will be the same one they see on their phone when the app has been downloaded.

- If you run a test for multiple locales, you won’t be able to distinguish between them in reporting. So, if you want to better understand test results per locale, you will have to create a different test per locale. Since you can only run one test at one time, you will have to do this sequentially, which can take a very long time.

- Tests use a relatively low confidence level (90%), which makes it difficult to reach a firm conclusion and decide whether to implement one of the versions tested. Put simply, a 90% confidence level leaves roughly one chance out of 10 that the results PPO shows you are wrong: the positive result you saw after running your test might turn into a conversion rate decrease when you apply the treatment, because you saw a “false positive.”

- Variants you test must be unique, which means you won’t be able to run A/B/B tests.
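To see why the 90% confidence level matters, it can help to check a result yourself. The sketch below uses a classic two-proportion z-test as a stand-in; we do not know how PPO computes its confidence figure, so treat this as an independent sanity check. The function name and the example numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """P-value of a two-proportion z-test comparing two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test when no one (or everyone) converted")
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical result: original 300/10,000 installs vs. treatment 345/10,000.
p = two_sided_p_value(300, 10_000, 345, 10_000)
print(f"significant at 90%: {p < 0.10}")  # True
print(f"significant at 95%: {p < 0.05}")  # False
```

In this made-up example, the treatment would count as a winner at 90% confidence but not at the stricter 95% level often used in A/B testing, so it is worth treating borderline PPO wins with caution.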

How to set up your first PPO test

We have compiled a comprehensive checklist to help you smoothly run your first iOS 15 product page optimization test:

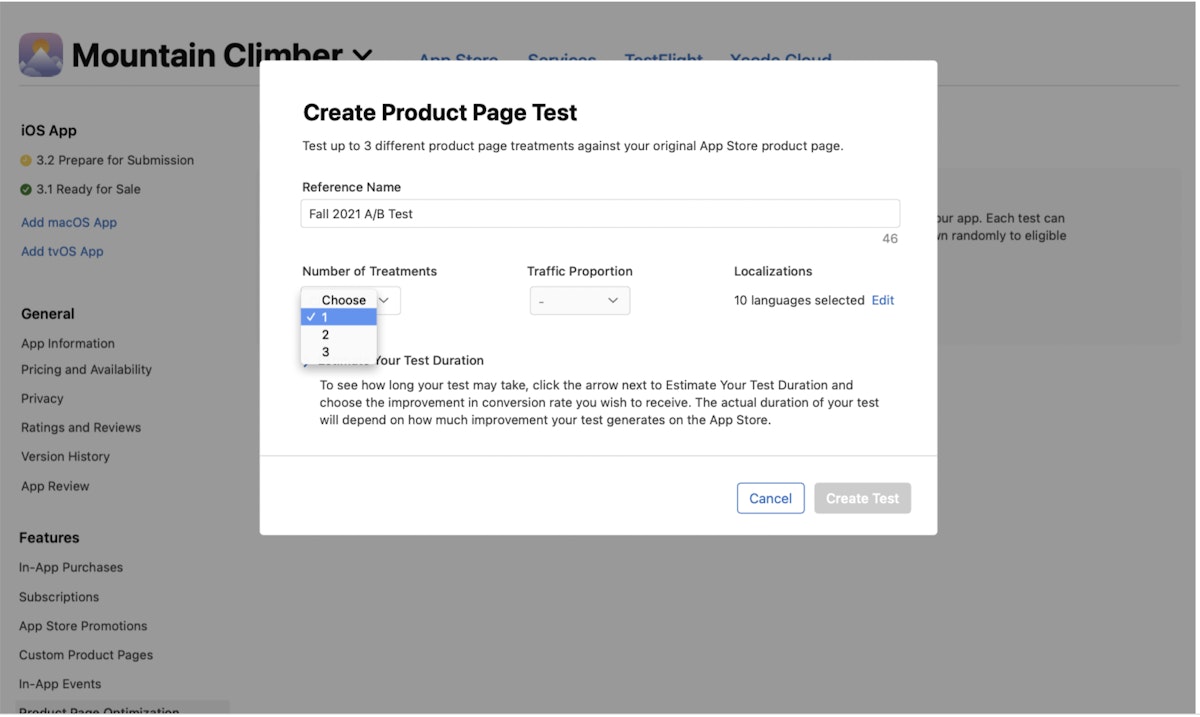

Step 1: Create test

To create a test, select “Product Page Optimization” on the left menu. Click “Create Test” if you haven’t previously created a test, or hit the add (+) button beside “Product Page Optimization.”

Source: Apple Developer

Step 2: Give your test a name

Create a test name (up to 64 characters). Make it descriptive so you can easily identify your test when viewing results in App Analytics. For instance, “Easter 2022 Screenshot Characters Test with 3 characters.”

Step 3: Choose the number of treatments

You can include up to 3 treatments.

Source: Apple Developer

Step 4: Determine your traffic allocation

Choose the proportion of users who will be randomly selected to be shown a treatment instead of your original App Store product page. We recommend you split traffic between your default product page and the different treatments equally.

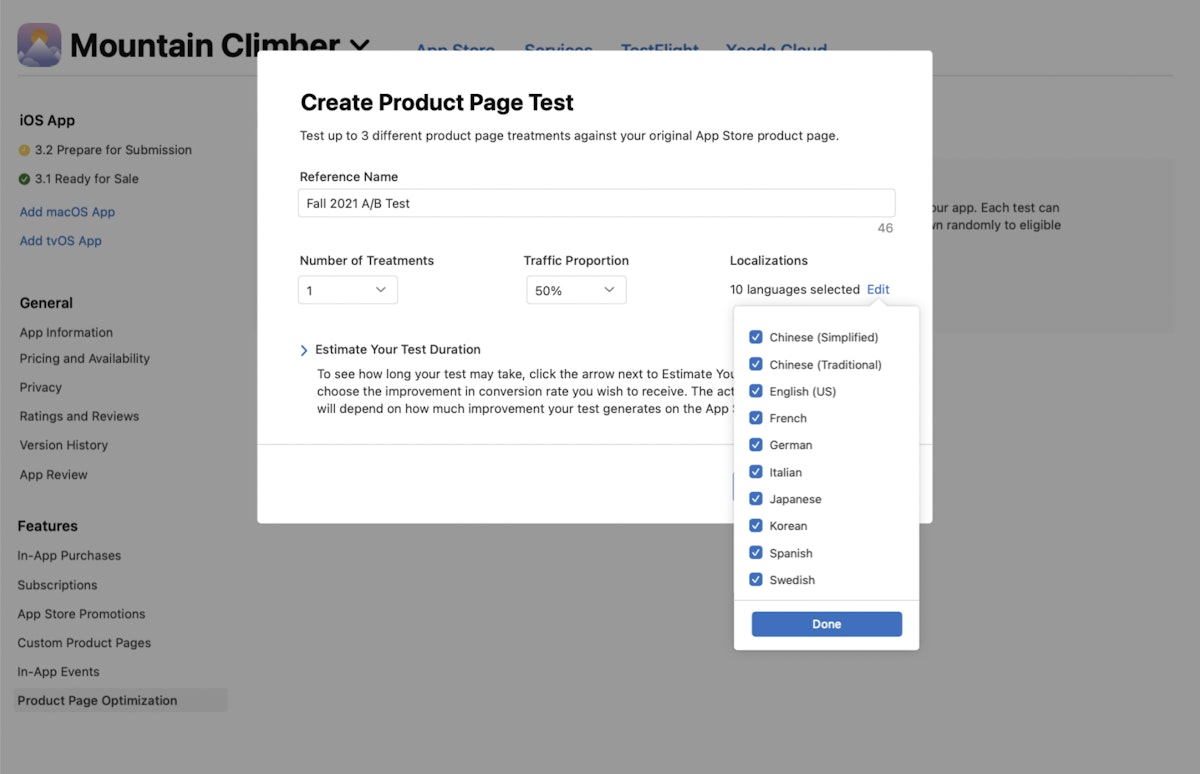

Step 5: Select the localization

If you are localizing the test, select the locale. Only locales within the current app version can be included in the experiment.

Source: Apple Developer

Step 6: Calculate your test duration

To estimate the time for you to achieve your goal, click on “Estimate Your Test Duration” and choose the improvement that you desire in the conversion rate. The test will run for a maximum of 90 days to help you determine if you have reached your desired results within that time. You can also manually end the test in that time span. Note that the more treatments you add to a test, the longer it may take to reach definite results.

Source: Apple Developer

Step 7: Launch your test treatments

Click “Create Test.” Try to limit the number of elements you want to change in a treatment at a given time so that you can easily identify the one that actually impacted the results. All your creative assets have to be accepted by App Review before you can use them. However, unless it is an app icon, you do not need to release a new version to launch the test.

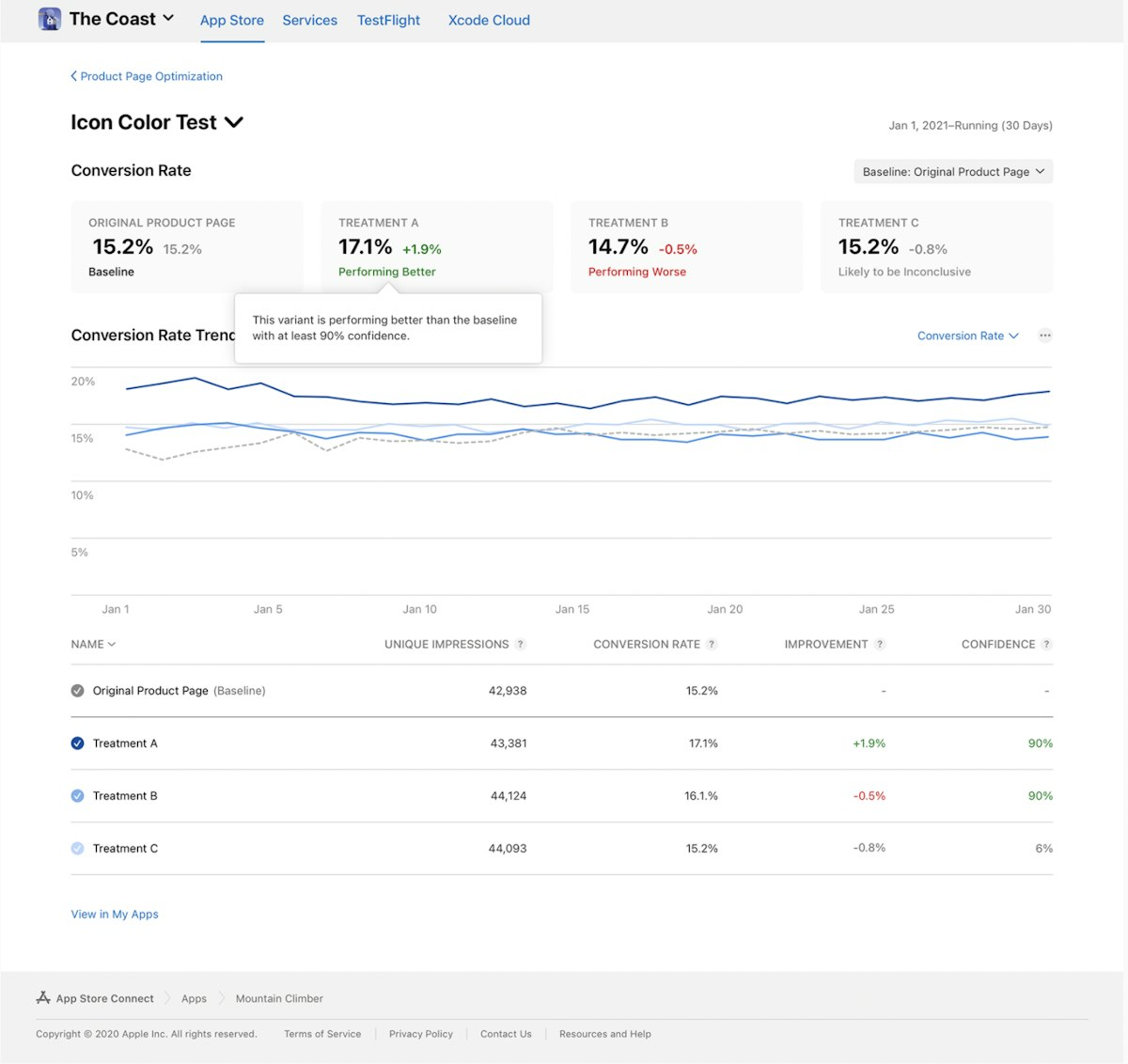

Step 8: Monitor your test

On App Analytics in App Store Connect, you will be able to evaluate performance metrics – such as conversions, unique impressions, improvement, confidence level, etc. – a day after running the test. You can measure these metrics against your baseline, which, by default, is your original product page.

Step 9: Implement the treatment

On the basis of your test results, you may want to deploy a treatment to your original product page so that it shows to all the App Store visitors. Applying a treatment while your test is still running will end the current test. Therefore, before hurrying to implement a treatment or ending a test, we recommend waiting and observing whether at least one treatment performs better or worse than the current baseline.

Source: Apple Developer

Best practices for A/B testing on the App Store

We have put together a few best practices to help you best take advantage of product page optimization (PPO).

1. Set up your tests based on the strength of your hypothesis

Carry out internal and external research to know your product strengths, best practices, and industry trends. Next, come up with a defined hypothesis and a predicted outcome. Ask yourself how the hypothesis could affect the user and/or which stakeholders may profit from the test results. For instance, testing a new background color can be either minor or significant depending on why the new color is being tested (for example, to assess whether users prefer a particular color or to make your screenshot elements clearer).

2. Do not replicate Google Play’s A/B strategy for iOS

Another important consideration for A/B testing on iOS is to test different creatives than you would on Google Play. Apple and Google are two distinct platforms with marked differences in user behavior, app popularity, and – most importantly – user interfaces, meaning that identical creatives would not be seen the same way.

3. Do not be in a hurry to end the test too early

It is ideal to wait until the console completes the test. For instance, if a game’s top users are more active on the weekend and you stop a test only 3 days after starting it on Monday, the results will not be accurate and might, in fact, affect your future test cycles. We, thus, recommend running tests for at least one week and in increments of 7 days.
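As a small illustration of the "increments of 7 days" rule, the helper below rounds a minimum duration up to whole weeks while staying inside the 90-day PPO limit. The function name is our own, and capping at 84 days (the largest number of full weeks under 90 days) is one possible choice, not an App Store rule.

```python
from math import ceil

MAX_TEST_DAYS = 90  # maximum duration of a PPO test


def full_week_duration(min_days: int) -> int:
    """Round a minimum test length up to whole weeks, capped under the limit."""
    weeks = max(1, ceil(min_days / 7))  # always run at least one full week
    longest_full_weeks = (MAX_TEST_DAYS // 7) * 7  # 84 days
    return min(weeks * 7, longest_full_weeks)


print(full_week_duration(3))   # 7
print(full_week_duration(10))  # 14
print(full_week_duration(89))  # 84
```

Running full weeks means every variant sees the same mix of weekdays and weekends, which avoids the skew described above.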

4. Look out for external factors that can impact installs

Any factor that affects the amount of traffic you receive on the App Store can skew the results of your A/B test. Marketing efforts and seasonal events might change the quality of the traffic you receive and change your conversion rate unexpectedly. In turn, you might think the change in conversion rate was caused by the A/B test when, in fact, it was caused by external factors.

5. Optimize for the right audience

Choose the right audience for your app or game. Knowing your users and where they come from can help a great deal in creating the right optimization techniques. Also, if your app/game is available in other markets, localizing or culturalizing your app store assets to specific locales or cultures is an important consideration for test plans.

6. Test one hypothesis at a time

It’s best to test only one hypothesis at a time to be able to say what exactly caused the increase or decrease in conversion rate. This means you can change multiple elements at a time as long as each of these changes supports your hypothesis. While testing, also ensure that your test variations are notable and significant enough to make a difference. Minor changes might not lead to desired results.

7. Iterative testing

Your optimization work is not a one-off. Remember that not all experiments produce a positive result. In that case, reassess your testing plan and test again. Analyze your test results and identify the treatment that performs the best. Once you get conclusive results, update your product page with the better-performing creative. Remember that even a variant that performs worse than the original may provide insights about your audience and should not be disregarded.

Conclusion

Product page optimization on the App Store is a useful tool to test the effectiveness of your app’s creative changes in a data-driven way. It is also the only tool that allows you to administer your different variants to live App Store traffic, resulting in less biased samples (and results).

Preparing your PPO tests by establishing a solid hypothesis and following these best practices is key to creating quality A/B tests. If done correctly, A/B tests can help you improve your conversion rate and/or gain useful insights about your audience that can inform your marketing efforts.

Visualize and monitor your competitors’ metadata updates, A/B tests, PPO, in-app events, and more with AppTweak.

Anthony Ansuncion

Taya Franchville

Georgia Shepherd