How to get your app discovered in ChatGPT and LLM search

AI search is creating a new layer of app discovery before users ever reach the App Store or Google Play. The opportunity for app marketers is not to replace ASO, but to understand how LLMs evaluate apps, which signals they trust, and how that changes what it means to be discoverable.

In our recent webinar, “How to get your app discovered in ChatGPT and LLM search,” we joined experts from Reddit and Yodel Mobile to discuss actions app marketers can take now. Attendees found the insights useful for preparing their AI visibility strategy, so we are sharing them here.

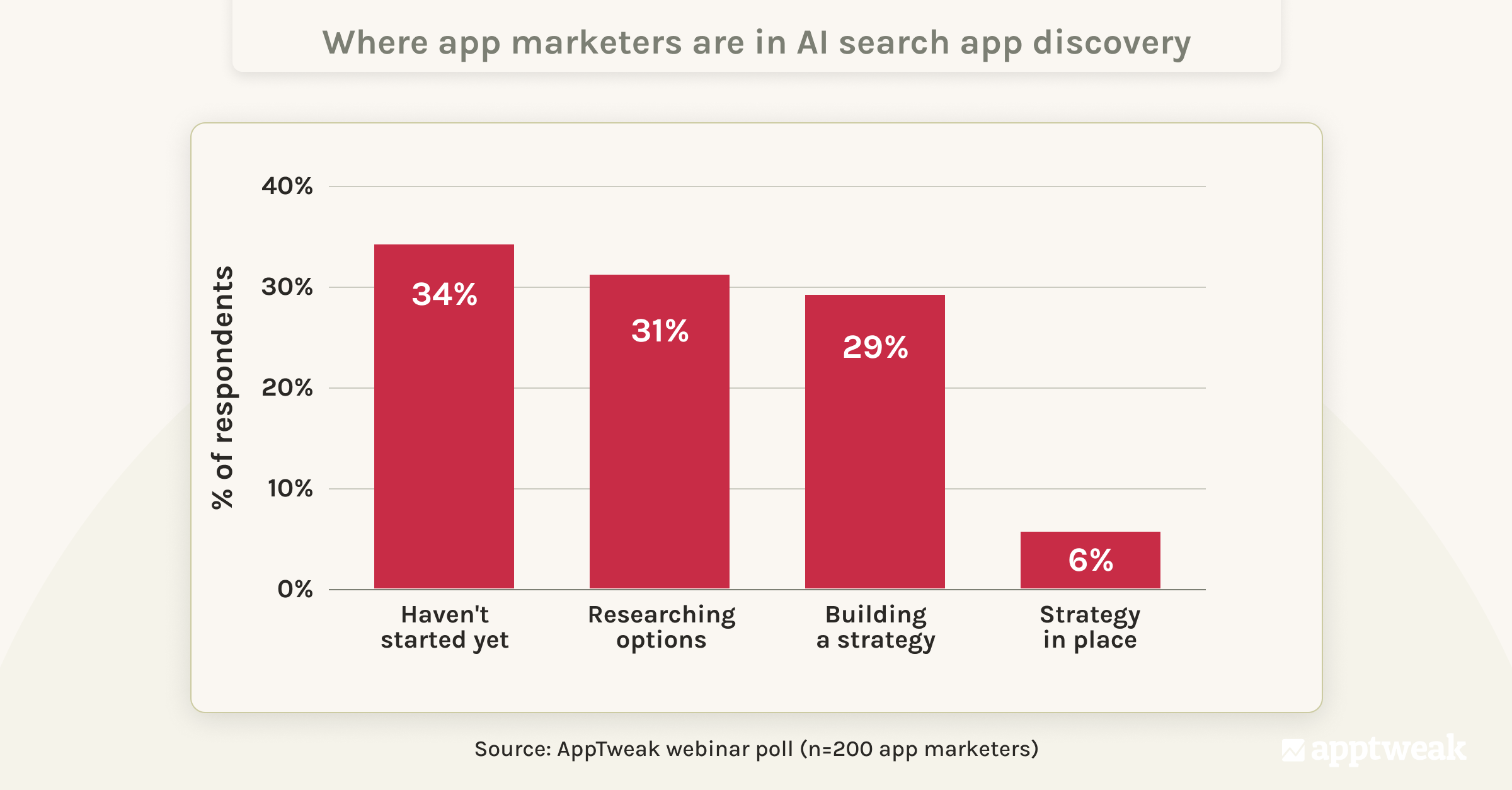

While interest in AI-driven app discovery is growing quickly, most app teams are still in the early stages of understanding and acting on it.

AppTweak’s webinar poll shows how early most app marketers are in their AI visibility journey in 2026: 34% have not started at all, 31% are researching options, and 29% are in the process of building a strategy. Only 6% report having a strategy already in place.

This creates a clear opportunity. As AI-driven discovery becomes a more important part of the user journey, teams that start building understanding and strategy now will be better positioned as the space matures.

Key takeaways

- LLM discoverability is becoming a new upstream layer in the app discovery journey, shaping which apps make it into consideration before users search in the store.

- LLMs evaluate apps through intent, context, and supporting evidence across the web, not through app store rankings alone.

- Community discussions, especially around real user problems and comparisons, can influence whether an app is surfaced in AI-generated recommendations.

- AI visibility is probabilistic, not position-based. The goal is not to “rank #1” in ChatGPT, but to increase the likelihood that your app is one of the most frequently mentioned for the right intents.

- For most app teams, the priority today is not building a full “AI playbook.” It is understanding how their app is currently represented, which sources influence recommendations in their category, and whether their positioning is consistent across the web.

App discovery is expanding beyond the store

For years, the dominant app discovery path was fairly straightforward. A user knew they wanted an app, went to an app store, searched a keyword or brand, and evaluated results there.

That journey is starting to fragment.

Today, more users are formalizing intent outside the store first. They ask ChatGPT, Gemini, Claude, Perplexity, or AI-powered search experiences which app they should use for a specific need. Only after getting a shortlist do they open the App Store or Google Play to evaluate screenshots, reviews, pricing, and fit.

The app stores are still the primary conversion environment. But they are no longer always the first place where users decide which apps deserve consideration. Increasingly, user research starts upstream in AI search engines, which can draw on app metadata as well as web search results, communities, and reviews. This shift matters because it changes where consideration begins.

While this shift is most visible in AI search engines, search algorithms in app stores are also getting better at interpreting intent and context. For a deeper dive, read How AI is changing relevance in app store search.

How LLMs evaluate and surface apps

LLM-based discovery does not work like traditional app store search or classic SEO. It is less about exact keyword matching and more about whether the model can understand your app as a credible solution to a user’s problem.

In AppTweak’s internal AI-era discoverability research, we describe this as an intent-first system. AI engines interpret what the user is trying to do, retrieve relevant information from multiple sources, and synthesize an answer based on how well different options match that intent. They do not rely solely on popularity signals. Instead, they tend to surface apps that are clearly described, well-supported across sources, and closely aligned with the user’s request.

LLMs start with the problem, not the keyword

When someone asks for “the best budgeting app for students,” the model is not just scanning for apps that contain the words “budgeting” and “students.” It is trying to interpret the task behind the prompt.

What is the user actually asking for?

A simple expense tracker?

A financial planner?

Something free?

Something beginner-friendly?

Something made for college life?

This is why LLM discoverability is closer to recommendation logic than ranking logic. The system first interprets the user’s intent, then evaluates which apps best fit that need. Only then does the LLM generate a recommendation.

Your app needs to be considered before it can be recommended by an LLM

One of the most useful distinctions from the webinar discussion is that in LLM environments, AI visibility often depends on whether your app is considered a relevant option in the first place.

Before an app can be recommended, it needs to be considered a plausible option for that prompt. This is influenced by whether your app is consistently associated with the relevant use case across the sources the model can access or retrieve.

In practical terms, that means your app needs to be clearly and consistently represented across multiple sources:

- Your company website and owned content

- Your app store listing (descriptions and visible content)

- Community discussions

- Review and comparison content

- Editorial content and media coverage (press articles, “best apps” lists, etc.)

These sources act as evidence that LLMs can retrieve, compare, and synthesize when generating recommendations. The more consistently your app is associated with a specific problem across these sources, the more likely it is to be surfaced as a relevant option.

Because these signals vary by model, category, and source, there is no single static view of your app’s visibility. The most practical way to start understanding how your app is surfaced today is to check it directly across different prompts and platforms.
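One way to make this check repeatable is to run the same prompt several times, save the answers, and count how often each app is mentioned. The snippet below is a minimal sketch of that idea, not a definitive method: the `mention_rates` function and the sample answers are hypothetical, and it assumes you have pasted the responses in by hand.

```python
import re
from collections import Counter


def mention_rates(responses, app_names):
    """Return the share of responses that mention each app at least once."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for app in app_names:
            # Word-boundary match so a name is not matched inside a longer word.
            pattern = r"\b" + re.escape(app.lower()) + r"\b"
            if re.search(pattern, lowered):
                counts[app] += 1
    total = len(responses)
    return {app: (counts[app] / total if total else 0.0) for app in app_names}


# Made-up answers for illustration only; replace with responses you collected.
responses = [
    "For beginners, try Nike Run Club or Strava.",
    "Strava is popular; Couch to 5K is great for new runners.",
    "Nike Run Club has guided runs for beginners.",
    "Many people start with Strava.",
]

rates = mention_rates(responses, ["Strava", "Nike Run Club", "Couch to 5K"])
for app, rate in sorted(rates.items(), key=lambda item: -item[1]):
    print(f"{app}: {rate:.0%}")
```

Because answers vary between runs, a rate based on a handful of responses is only a rough signal. Repeating the check across prompts and platforms gives a more reliable picture.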

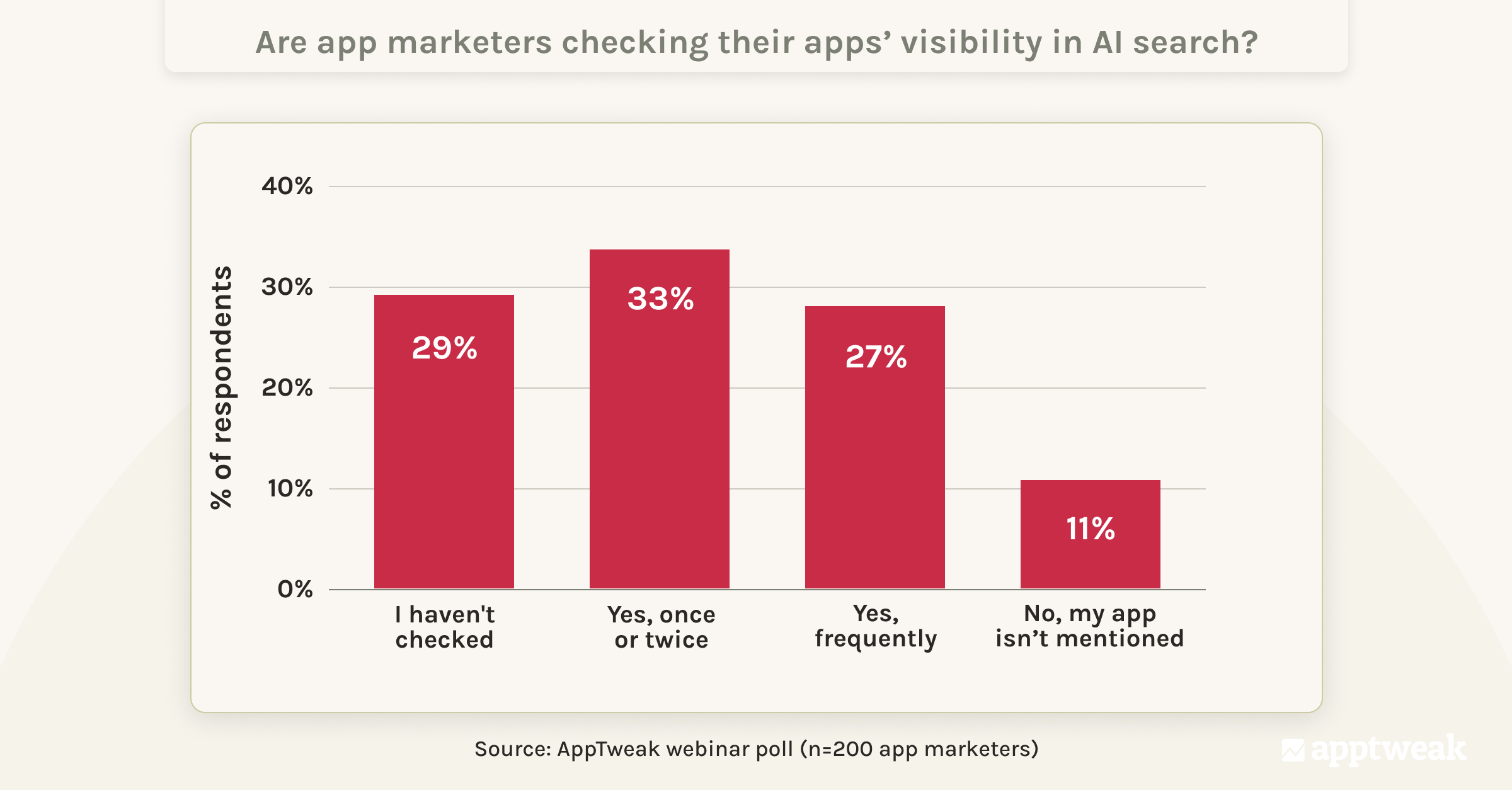

Yet many app marketers are still not actively doing this.

Our webinar poll highlights this gap clearly. While 33% of respondents have checked their app’s visibility once or twice, only 27% report doing so frequently. At the same time, 29% have never checked at all.

Without actively checking how your app is surfaced in tools like ChatGPT or Perplexity, it’s difficult to understand where you appear, which competitors are being recommended instead, and which sources influence those outcomes. This is why the first step is building visibility into how your app is currently surfaced.

How app store signals influence LLM recommendations

While LLMs draw from a wide range of sources, your app store listing still plays a key role — especially because it provides structured, high-confidence information about what your app does.

App store listings are not always directly retrieved by LLMs, but they can contribute to how an app is represented across the web and understood through training data or retrieval.

Based on our early observations, here’s how different elements of your app store presence influence LLM recommendations.

How app store signals contribute to LLM understanding and recommendations

| App store signal | Contribution to LLM understanding | Why it matters |
|---|---|---|
| Core positioning (what the app does) | Can contribute (when accessible) | Helps LLMs match your app to specific user intents |
| Use case descriptions in long descriptions | Can contribute (when accessible) | Provides context for who the app is for, and when and why it should be recommended |
| User feedback & real-world usage | Supporting | Can reinforce credibility and real-world context when surfaced in retrieved content |
| App classification | Supporting | Helps link your app to a specific category or vertical |
| Visual elements | Limited | Secondary to text for most LLMs today |
| Hidden keyword fields | None | Not directly visible to LLMs |
| App store performance metrics (e.g. downloads, conversion rate, retention) | None | Not directly visible to LLMs |

Note that LLMs rely on publicly available, text-based content, not hidden fields or internal app store data.

While your app store listing helps reinforce how your app is understood, it is rarely the primary driver of LLM visibility on its own. LLM recommendations are typically shaped by a broader set of web and community signals. That said, a clear and well-structured app store listing remains critical for conversion, as users still rely on it to evaluate and validate apps before installing.

Why community discussion matters so much

One insight from the webinar is that online communities can play an outsized role in how apps get surfaced, especially when users ask comparative or recommendation-style questions.

That is not surprising. LLMs are especially good at synthesizing discussion-rich, opinion-rich content when the query is subjective or contextual. Recommendation prompts like “best running app for beginners” or “best dating app for serious relationships” are not purely factual. They require defensible judgment.

This is where communities become valuable. They give the model more than mentions. They give it context.

For example, on Reddit, a broad “beginner running” discussion surfaced popular apps like Strava, MyFitnessPal, and Nike Run Club. But a more specific “couch to 5K” community surfaced a different set of apps because the underlying user need was narrower and more beginner-specific.

The difference lies in how apps are associated with specific user needs, not just how popular they are. Each discussion reinforces different associations between apps and particular use cases. In a broad category, well-known apps tend to dominate. But in more specific contexts, different apps can emerge because they are more closely aligned with that particular need.

The lesson: nuance matters. The apps associated with a broad category are not always the ones associated with a specific intent within that category. For LLMs, this means recommendations are shaped by how clearly an app is tied to a particular use case, not just how widely it is known.

What early signals are telling us

While it’s still early, several signals are already becoming clear in how LLMs surface app recommendations.

1. App store rank and AI visibility are not the same thing

While a strong app store ranking can indirectly help AI visibility because it often correlates with broader brand visibility, more reviews, more editorial mentions, and more web coverage, it’s not a direct proxy for LLM discoverability. We’ve observed that some apps appear disproportionately often in AI-generated answers and that app store rank does not map perfectly to AI visibility.

An app can rank highly in the store and still be surfaced inconsistently in LLM answers if its positioning is vague or if the broader web does not strongly associate it with a specific use case. Conversely, a smaller or more niche app can appear frequently in AI-generated recommendations if it has a clearer, more defensible association with a particular problem.

2. Not all mentions are equal: depth and trust often matter more than volume

A lot of marketers instinctively ask whether mention volume is the main thing that matters. The more useful answer is that volume helps, but trust makes recommendations durable.

From the webinar discussion, one important observation was that older, richer discussion threads often appear more trustworthy than brand-new promotional content. Not simply because they are old, but because they show layered dialogue, disagreement, follow-up, and accumulated validation over time.

In AI environments, that matters. A thread with depth, nuance, and real human trade-off discussion gives the model more defensible material than a shallow promotional mention.

This can create a challenge for apps that evolve quickly and want newer features to be reflected in AI-driven results. Rather than trying to replace existing conversations, a more effective approach could be to contribute to them—framing new features within the context of ongoing discussions, so they build on established, trusted narratives instead of starting from scratch.

This mirrors a broader principle from our AI discoverability research: AI engines prefer grounded, specific, and reasoning-ready content over vague, purely promotional material.

3. Structured, intent-aligned web content is easier for LLMs to reuse

Another early signal is that LLM-friendly content formats, particularly those published on the web, tend to be highly structured.

Common formats that are easier for LLMs to retrieve and synthesize include:

- Question-and-answer pages

- Comparisons

- Best-of lists

- Problem-solution pages

- Clear pros and cons

- Pages written around one distinct intent

These formats work well because they clearly map content to a specific user intent, making it easier for LLMs to extract and reuse relevant information.

That does not mean every brand should rush to publish generic “top 10 apps” listicles. It means that when content is clearly organized around a user problem and written in direct, factual language, it is easier for an AI engine to retrieve, interpret, and synthesize.

4. The sources that matter vary by category and audience

One of the best practical takeaways from the webinar is that there is no single source strategy for every app category. Different verticals pull from different ecosystems. Different models also rely on different source mixes. And the surfaces that matter may vary depending on your audience.

For example:

- Younger audiences may lean more toward social and AI assistants directly

- Older audiences may encounter app discovery through AI-powered search experiences in browsers

- Ecommerce-style recommendation patterns may pull more heavily from UGC and review ecosystems

- Some prompts may favor editorial and comparison content

- Others may favor communities

Expert Tip

It’s essential to first identify where LLMs source recommendations for your category before launching new AI visibility initiatives.

What app marketers can do now to increase AI visibility

Although it is still early, app marketers who move first to increase their apps’ AI visibility are likely to gain a competitive advantage. Here are steps you can take now.

1. Start by auditing how LLMs understand your app

Before changing messaging, websites, or metadata, look at how your app is already being surfaced by ChatGPT, Claude, Gemini, and other AI search engines.

What prompts bring it up?

What prompts do not?

Which competitors appear more consistently?

What sources are being cited?

Are those sources communities, brand pages, review pages, comparisons, or app store pages?

This is the foundational step because you cannot improve AI visibility until you understand the source patterns in your category.
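To see source patterns at a glance, you can group the URLs cited in AI answers by source type. The sketch below assumes you have copied the cited links by hand. The `SOURCE_TYPES` mapping, the function names, and the example URLs are illustrative assumptions that you would adapt to your own category.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mapping from domain to source type; extend it for your category.
SOURCE_TYPES = {
    "reddit.com": "community",
    "apps.apple.com": "app store",
    "play.google.com": "app store",
}


def classify_source(url, brand_domains=()):
    """Label a cited URL as brand page, community, app store, or other."""
    host = urlparse(url).netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    if host in brand_domains:
        return "brand page"
    for domain, source_type in SOURCE_TYPES.items():
        # Match the domain itself or any of its subdomains.
        if host == domain or host.endswith("." + domain):
            return source_type
    return "other"


def source_mix(cited_urls, brand_domains=()):
    """Count how many cited URLs fall into each source type."""
    return Counter(classify_source(url, brand_domains) for url in cited_urls)


# Placeholder URLs for illustration only.
cited = [
    "https://www.reddit.com/r/running/",
    "https://old.reddit.com/r/C25K/",
    "https://apps.apple.com/us/app/example",
    "https://example.com/features",
    "https://blog.example.org/best-running-apps",
]
mix = source_mix(cited, brand_domains=("example.com",))
print(mix.most_common())
```

A large "other" bucket usually means the mapping needs more entries, for instance review sites or editorial publications that matter in your vertical.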

2. Position your app around the problem it solves

Feature-heavy messaging alone is rarely enough in LLM search. Think about how users interact with tools like ChatGPT; they describe what they want to do in natural language.

If your app is described differently across your website, app store pages, social content, and community presence, the model has weaker signals to associate it with a specific need. If those sources repeat a consistent framing around the user problem you solve, your likelihood of being recommended increases.
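A rough way to spot inconsistent framing is to compare the key terms used to describe your app in each source. The following is a minimal sketch using Jaccard overlap on word sets. The stopword list, function names, and sample descriptions are assumptions for illustration, and plain word matching will miss synonyms and word variants such as "student" and "students".

```python
import re
from itertools import combinations

# Small illustrative stopword list; not exhaustive.
STOPWORDS = {"a", "an", "and", "the", "for", "to", "of", "your", "that", "with", "in", "on", "is"}


def key_terms(text):
    """Lowercase the text and keep the words that are not stopwords."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {word for word in words if word not in STOPWORDS}


def jaccard(a, b):
    """Share of terms two sets have in common (1.0 means identical sets)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def positioning_overlap(descriptions):
    """Return the Jaccard overlap for every pair of sources."""
    terms = {source: key_terms(text) for source, text in descriptions.items()}
    return {
        (first, second): jaccard(terms[first], terms[second])
        for first, second in combinations(sorted(terms), 2)
    }


# Hypothetical descriptions of the same app in three places.
descriptions = {
    "website": "A budgeting coach for students",
    "app store": "A budgeting coach for students and graduates",
    "community": "Student discount tool",
}
overlap = positioning_overlap(descriptions)
for pair, score in overlap.items():
    print(pair, round(score, 2))
```

Low overlap between two sources does not prove a positioning problem, but it is a useful prompt to read both descriptions side by side.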

Expert Tip

A simple test is this: if someone asked an LLM about the problem your app solves, would your app consistently appear as a recommended solution across different sources?

If not, your issue is probably not “AI visibility” as such. It’s positioning clarity.

3. Treat community participation as a discoverability input

Many app teams still think of communities mainly as brand or support channels. In AI-driven discovery, they are also becoming part of the recommendation layer.

That does not mean spamming communities with promotion. It means showing up where real discussions already happen, understanding unmet needs, correcting outdated perceptions when appropriate, and contributing authentically.

The strongest panel advice on community participation is this: be helpful first. That is not just good community behavior. It is also more likely to create the kind of durable, trustworthy discussions that AI search engines can later reuse.

AI visibility starts with intent, not ranking

One of the most important shifts is to stop treating LLM discoverability as a classic ranking problem, as we do in app store search or SEO.

Instead, app marketers should ask “for which user intents is my app a credible answer?”

This framing better reflects how LLM systems work. They do not simply rank a fixed index of apps. They generate recommendations by combining signals from multiple sources and selecting options they can reasonably support based on the user’s request.

In practice, that means visibility is less about position and more about association.

So the more useful questions to ask regarding AI visibility are:

- Where is our app clearly understood as the right solution to a specific problem?

- Where is it mentioned, but not strongly tied to a clear use case?

- Where is it missing entirely from relevant conversations?

These questions will help to set up your strategy for AI visibility.
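The three questions above can be turned into a simple triage over text you have collected, such as AI answers or community threads. The sketch below is a naive heuristic under stated assumptions: `association_status` is a hypothetical helper, "BudgetBuddy" is a made-up app name, and an app counts as "clearly tied" only when it appears in the same sentence as one of your use-case terms.

```python
import re


def association_status(texts, app_name, use_case_terms):
    """Classify how an app shows up in a set of texts.

    Returns "clearly tied", "mentioned only", or "missing".
    """
    app = app_name.lower()
    terms = [term.lower() for term in use_case_terms]
    mentioned = False
    for text in texts:
        # Naive sentence split on ., !, or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", text.lower()):
            if app not in sentence:
                continue
            mentioned = True
            if any(term in sentence for term in terms):
                return "clearly tied"
    return "mentioned only" if mentioned else "missing"


# Made-up snippets for illustration only.
tied = ["BudgetBuddy is great for tracking student expenses. I also use spreadsheets."]
weak = ["I tried BudgetBuddy last year."]
absent = ["Spreadsheets work fine for me."]

for label, texts in [("tied", tied), ("weak", weak), ("absent", absent)]:
    print(label, "->", association_status(texts, "BudgetBuddy", ["student"]))
```

Choose use-case terms that do not appear inside the app name itself, otherwise every mention will look like a strong association.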

Conclusion: ASO still matters, but the discovery journey is expanding

AI search is not replacing ASO. It is expanding the discovery journey around it.

Users still install from the store. App store pages still matter. Conversion still matters. But the path into consideration is changing, as AI search engines, communities, and search experiences increasingly shape which apps are evaluated in the first place.

As a result, app discoverability is becoming more distributed, more intent-driven, and more dependent on how clearly your app is understood across sources.

For app marketers, that means the goal is no longer just to be searchable. It is to be recommendable.

And that starts with being clearly associated with the right user intents.

FAQs

Why do community discussions influence AI-generated app recommendations?

Community discussions can have a strong influence on LLM answers because they provide context, not just mentions.

In the webinar, one key observation was that AI systems are particularly effective at synthesizing discussion-rich content—especially for recommendation-style queries that involve trade-offs, preferences, and real-world use.

A forum thread, Reddit discussion, or user comparison can help a model understand:

- which app solves which problem

- for which type of user

- and what trade-offs are involved

These elements give AI search engines more grounded, contextual information to draw from, compared to isolated or purely promotional content.

If the App Store description historically had little impact on keyword rankings, could AI-driven search make it more important for app discovery?

It’s possible, but not yet proven.

App store descriptions contain structured, text-based information about what an app does, which could help reinforce how it is understood when that content is accessible to AI systems—whether through web indexing, training data, or retrieval.

In practice, descriptions can help by:

- clearly defining the app’s core use cases

- explaining who the app is for and when it should be used

- reinforcing positioning that appears across other sources

However, there is no clear evidence that app store descriptions are a primary driver of LLM recommendations today. Most AI-generated recommendations appear to be shaped more strongly by broader web and community signals.

For now, app store descriptions should be treated as a supporting signal. They can strengthen how your app is described and understood, but their impact depends on how consistently that positioning is reflected across the wider ecosystem.

Should app marketers use the same messaging on their website, app store listing, and community channels?

Your app’s core positioning should remain consistent, even if the format and tone vary by channel.

When multiple public sources describe your app in a similar way—highlighting the same core use case, audience, and problem solved—it reinforces how the app is understood and associated with specific user intents.

That does not mean every channel should use identical wording. Your website can go deeper, your app store listing can focus on conversion, and community participation can be more conversational. But the underlying framing should stay aligned.

If different sources describe your app in conflicting ways—for example, as a budgeting coach in one place, a finance tracker in another, and a student discount tool elsewhere—it creates weaker and less consistent signals about what your app is actually for.

Consistency across owned content, store pages, and community discussions helps reinforce clearer associations between your app and the problems it solves.

Georgia Shepherd

Alexandra De Clerck

Ryan Angerami