Spy on Your Competitors’ A/B Tests

With our new A/B testing feature, AppTweak is giving you more insights than ever! Have you ever wondered which metadata elements your competitors are testing? Now you can see directly which apps are running A/B tests, on which metadata, and which version they decided to keep. Read on and start fine-tuning your strategy.

Expert Tip

AppTweak has expanded its features to include support for Apple’s product page optimization (PPO). With PPO data on AppTweak, get valuable insights into your competitors’ mobile marketing tactics. Discover the creative strategies they are experimenting with and identify the elements that most effectively engage your target audience.

What is A/B testing?

A/B testing is a process that allows you to test two versions of the same variable to see which one performs better. It’s very often used in marketing to test web pages, ad creatives, emails, newsletters and more.

Apps can also run A/B tests on their metadata (for example, title, subtitle, description, feature graphic, icon, screenshots, and videos).

The important difference between an A/B test and an update is that an update goes live without a testing period. Uploading a new version directly, without testing it, can be less expensive for the company, but you risk choosing a version that appeals to your customers less than another one would have.

You might be unsure about how many images to test and for how long. Our new feature in the Timeline section can help you by analyzing your competitors’ A/B testing strategies and guiding you in developing your own.

Our A/B testing algorithm

Now that we have cleared up some definitions, we can dive deeper into how this feature works.

In the Timeline section of the tool, you may have seen different coloured dots representing: updates (green), A/B tests (orange), and an A/B test on one metadata element plus an update on another (green and orange).

What we consider an update

Our algorithm checks whether a metadata element (icon, description, title, etc.) was changed on a given day, and then looks for another change to the same element during the following two days. For instance, if an icon is changed once and is not changed again during the next two days, that change is considered an update.

What we consider an A/B test

On the other hand, if the algorithm sees another change to the same element within the next two days, we consider that the app’s developers are A/B testing that metadata element. In that case, the dots are orange.
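The two rules above can be sketched in a few lines of Python. This is only an illustration of the rule as described here, not AppTweak’s actual implementation: the function name `classify_changes`, the labels `"update"` and `"ab_test"`, and the sample dates are all assumptions made for the example.

```python
from datetime import date, timedelta

# Assumption: a change followed by another change to the same element
# within two days counts as part of an A/B test.
FOLLOW_UP_WINDOW = timedelta(days=2)


def classify_changes(change_dates):
    """Label each change date of ONE metadata element.

    Returns a dict mapping each date to "ab_test" if another change
    follows within FOLLOW_UP_WINDOW, otherwise to "update".
    """
    dates = sorted(set(change_dates))
    labels = {}
    for i, day in enumerate(dates):
        has_follow_up = (
            i + 1 < len(dates) and dates[i + 1] - day <= FOLLOW_UP_WINDOW
        )
        labels[day] = "ab_test" if has_follow_up else "update"
    return labels


# Hypothetical icon change dates, for illustration only.
icon_changes = [date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 4), date(2024, 1, 10)]
for day, label in classify_changes(icon_changes).items():
    print(day.isoformat(), label)
```

Read literally, the rule labels the last change in a rapid sequence as an update, because nothing follows it within two days. That fits the idea of a version the developers decided to keep, but the real tool may display it differently.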

Of course, on some days an app will run an A/B test on one metadata element and publish an update for another. This is why you will sometimes find two coloured dots on the same day in the Timeline.
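As a rough sketch of how a day’s dots could follow from what happened on that day, assume each changed element carries one of two hypothetical labels, `"update"` or `"ab_test"`. The function name `dot_colours` and the label strings are illustrative assumptions, not part of the tool.

```python
def dot_colours(labels_for_day):
    """Return the dot colours for one day.

    labels_for_day maps a metadata element (e.g. "icon") to the
    hypothetical label "update" or "ab_test" for that day.
    """
    labels = set(labels_for_day.values())
    colours = []
    if "update" in labels:
        colours.append("green")   # at least one plain update
    if "ab_test" in labels:
        colours.append("orange")  # at least one A/B test
    return colours


# An A/B test on the icon plus an update to the description on the same day.
print(dot_colours({"icon": "ab_test", "description": "update"}))
```

A day with no changes yields an empty list, so no dot would be drawn.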

To get this data, we fetch each app’s data every day and store it in our database. A/B tests are identified with the algorithm described above, so an A/B test that behaves differently from the pattern we look for may not be detected.
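To make the daily fetch concrete, here is a minimal sketch of how change dates could be derived from one stored snapshot per day. The data layout (a dict of date to metadata values), the function name `change_dates`, and the sample values are assumptions for illustration; the source does not describe how the data is actually stored.

```python
from datetime import date


def change_dates(snapshots):
    """Find the days on which each metadata element changed.

    snapshots maps a date to a dict of {element: value} as seen that day.
    Returns {element: [dates where the value differs from the previous
    snapshot]}.
    """
    changes = {}
    previous = None
    for day in sorted(snapshots):
        current = snapshots[day]
        if previous is not None:
            for element, value in current.items():
                if element in previous and previous[element] != value:
                    changes.setdefault(element, []).append(day)
        previous = current
    return changes


# Hypothetical daily snapshots of one app.
snapshots = {
    date(2024, 1, 1): {"icon": "icon_a.png", "title": "My Game"},
    date(2024, 1, 2): {"icon": "icon_b.png", "title": "My Game"},
    date(2024, 1, 3): {"icon": "icon_a.png", "title": "My Game"},
}
print(change_dates(snapshots))
```

With one snapshot per day, a variant that appears and disappears between two fetches would leave no trace in this sketch, which is one plausible way a test could go undetected.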

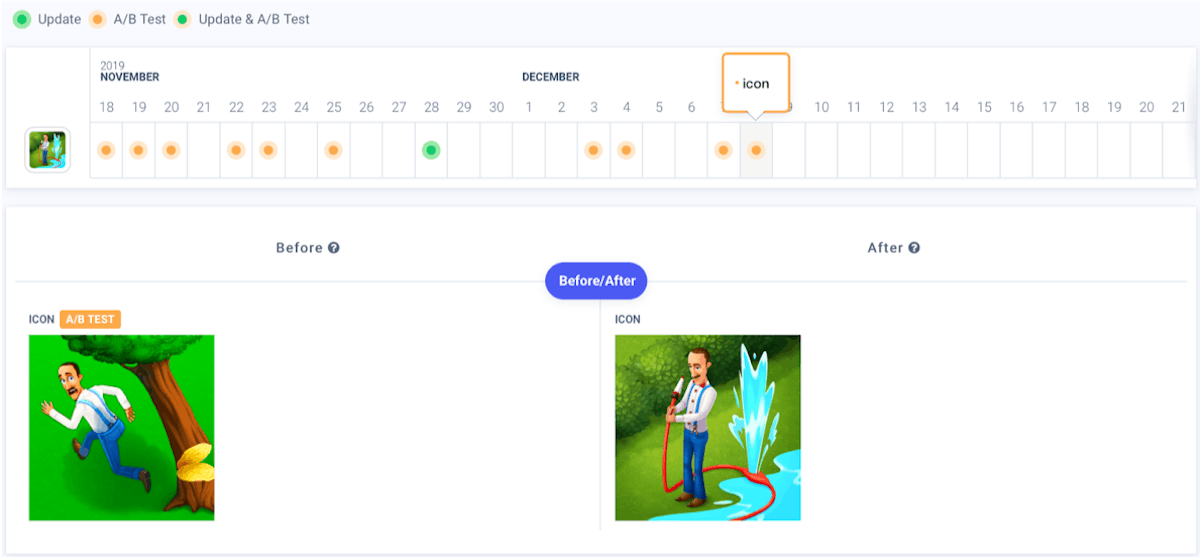

All these coloured dots may seem overwhelming at first, but if you want more detail on a particular A/B test, you can click on the dot of your choice and all the details will be shown in a table below.

We looked at the icon changes for the app Gardenscapes: between November 18 and December 8, the app went through two A/B testing phases. They kept the icon they selected on December 8.

Learn 10 tips to make your app icon stand out

Understand A/B testing strategies with AppTweak

1. Discover what & how often competitors are A/B testing

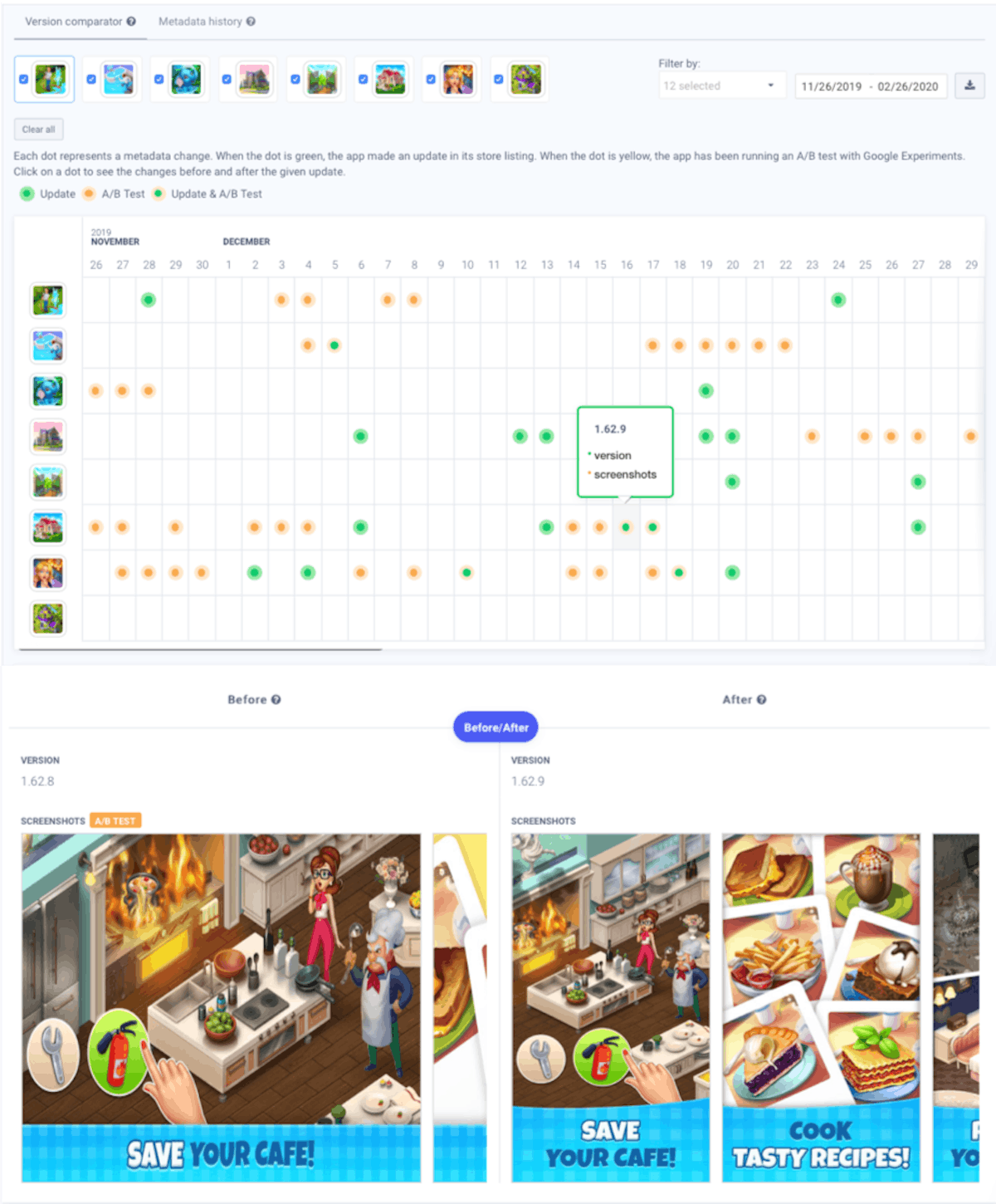

With our colour code, you can directly identify in our Timeline if an app has been A/B testing. When you hover over the dot, you can further identify what element they are A/B testing. When you click on a dot, you can even see which versions they are testing.

Here, we took 8 gaming apps in the US (Gardenscapes, Homescapes, Wildscapes, Lily’s Garden, Royal Garden Tales, Manor Cafe, Matchington Mansion, Butterfly Garden Mystery). We selected all metadata types and looked at the changes on December 16. By clicking on a dot, you can see the details for that date (here, we clicked on one of Matchington Mansion’s dots on December 16).

Within a group of competitors, some apps will likely A/B test a given metadata element more than others. You can learn from each A/B test your competitors run.

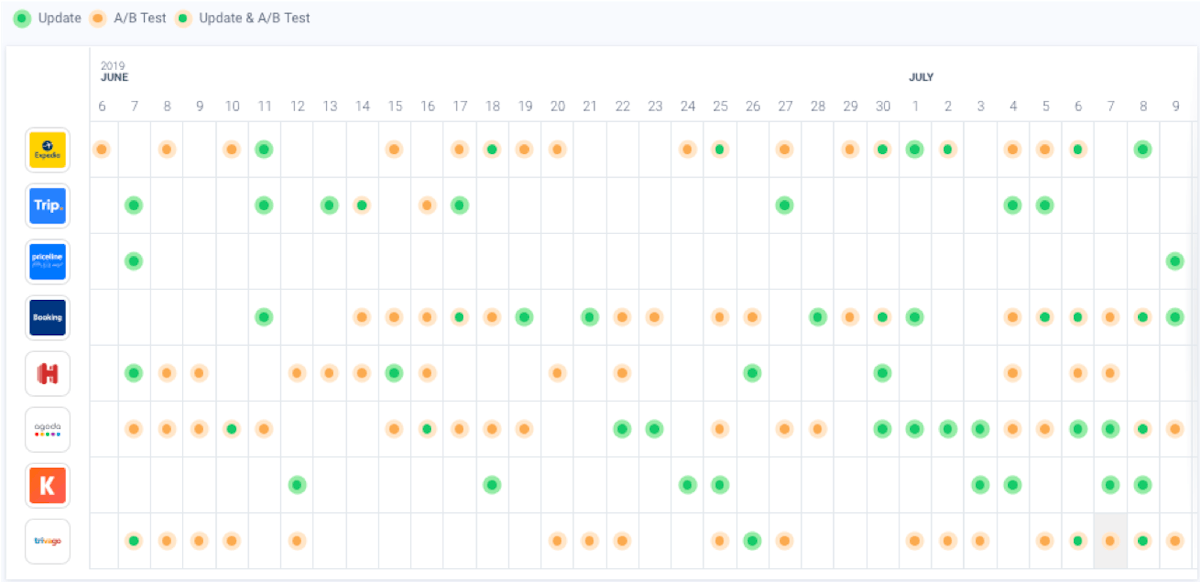

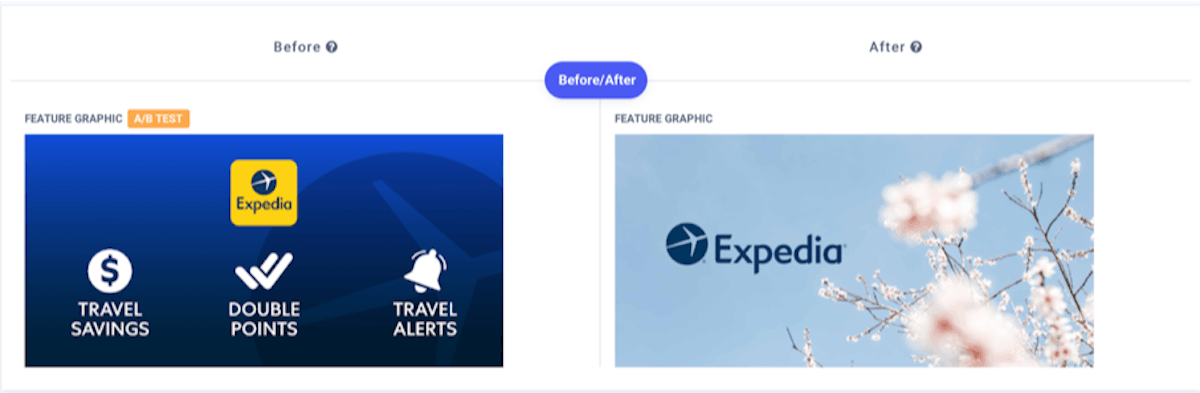

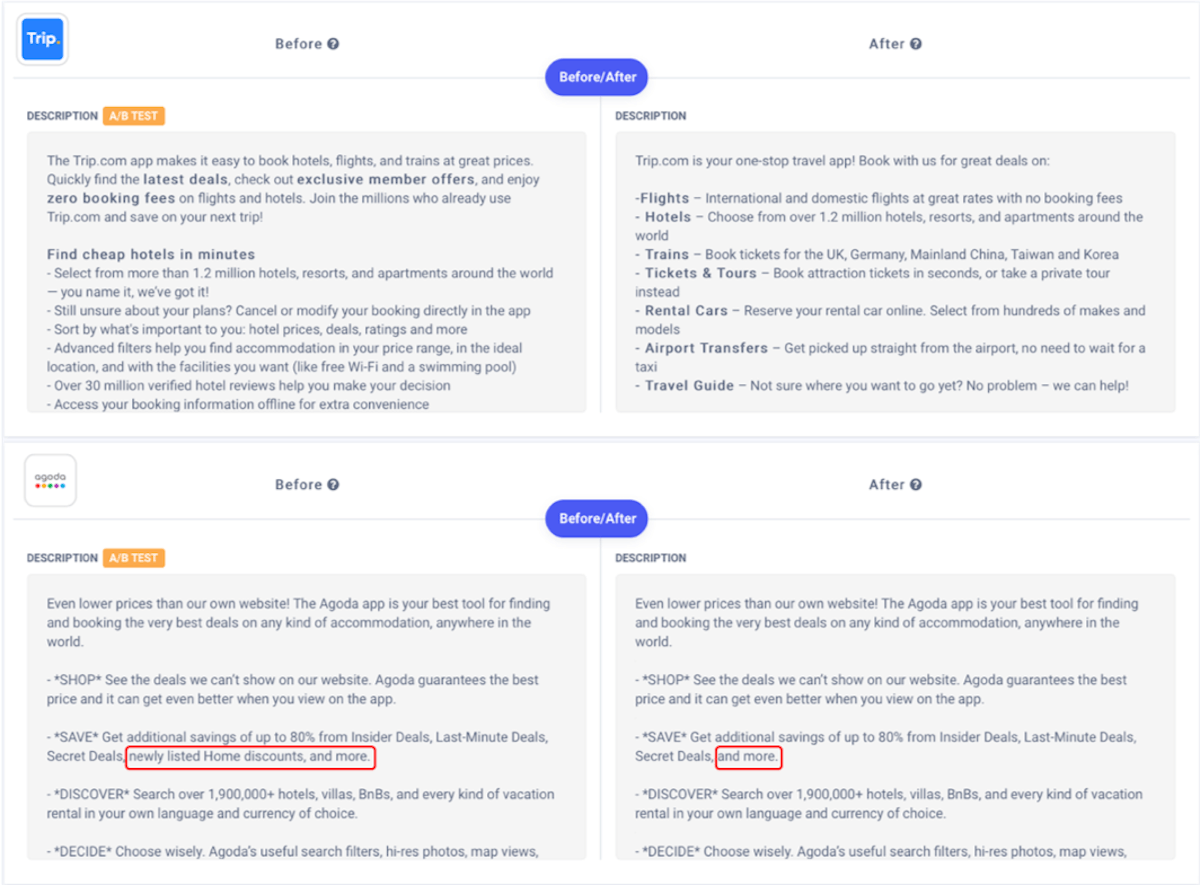

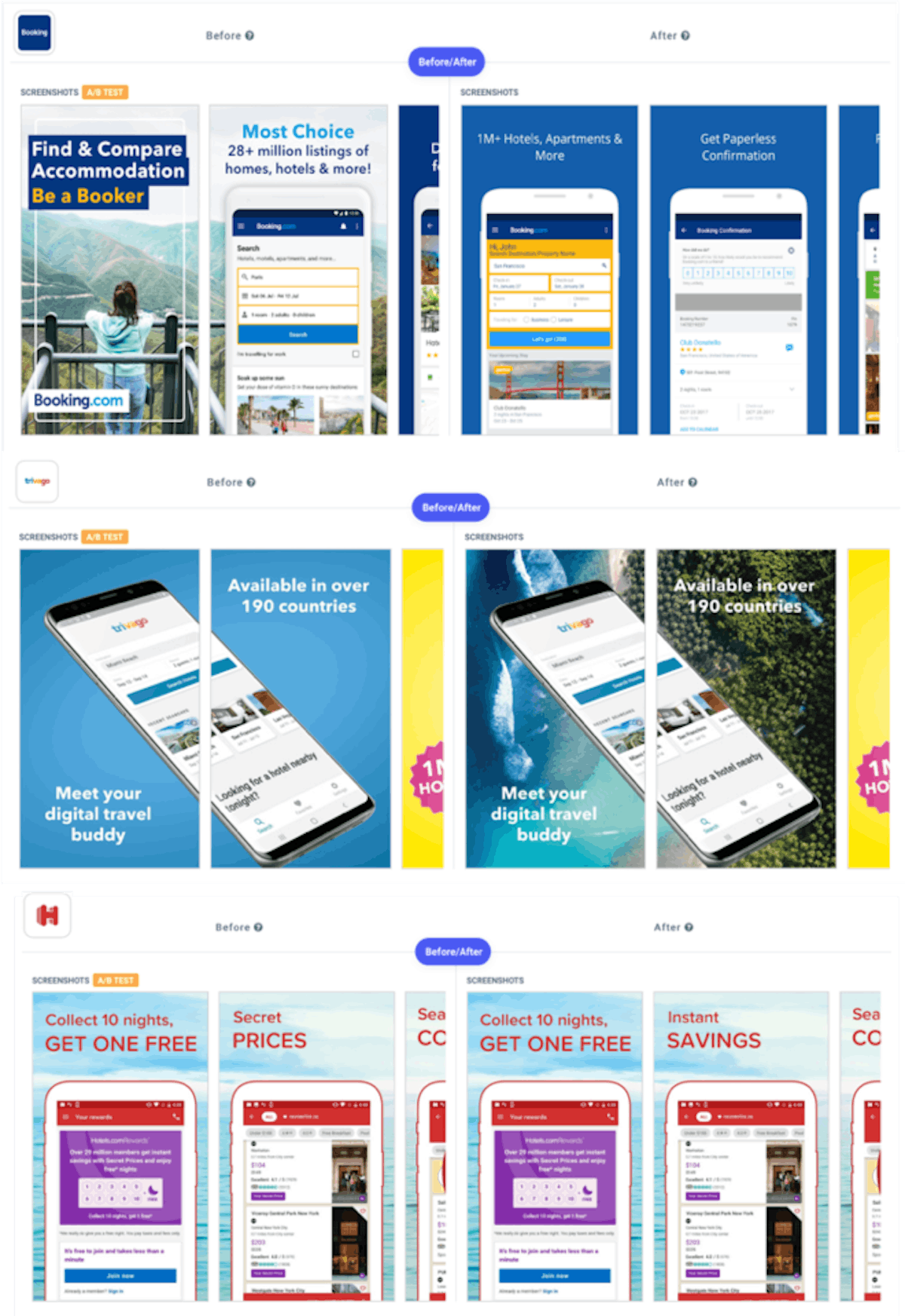

Here, for example, we selected a peer group of travel and booking apps (Expedia, Trip.com, Priceline, Booking.com, Hotels.com, Agoda, KAYAK and Trivago). They all compete in the same category, but each is A/B testing different metadata right before the summer.

During this period, Expedia is testing its feature graphic to see if a quick overview of its offer performs better than an open-air photo.

Trip.com & Agoda are working on their app description. Trip.com changed their entire text structure, while Agoda is trying to see if including “newly listed Home discounts” would make a difference.

Booking.com, Hotels.com, and Trivago are testing their app screenshots. Booking.com and Trivago are testing the background picture, while Hotels.com is comparing the phrases “secret price” and “instant savings.”

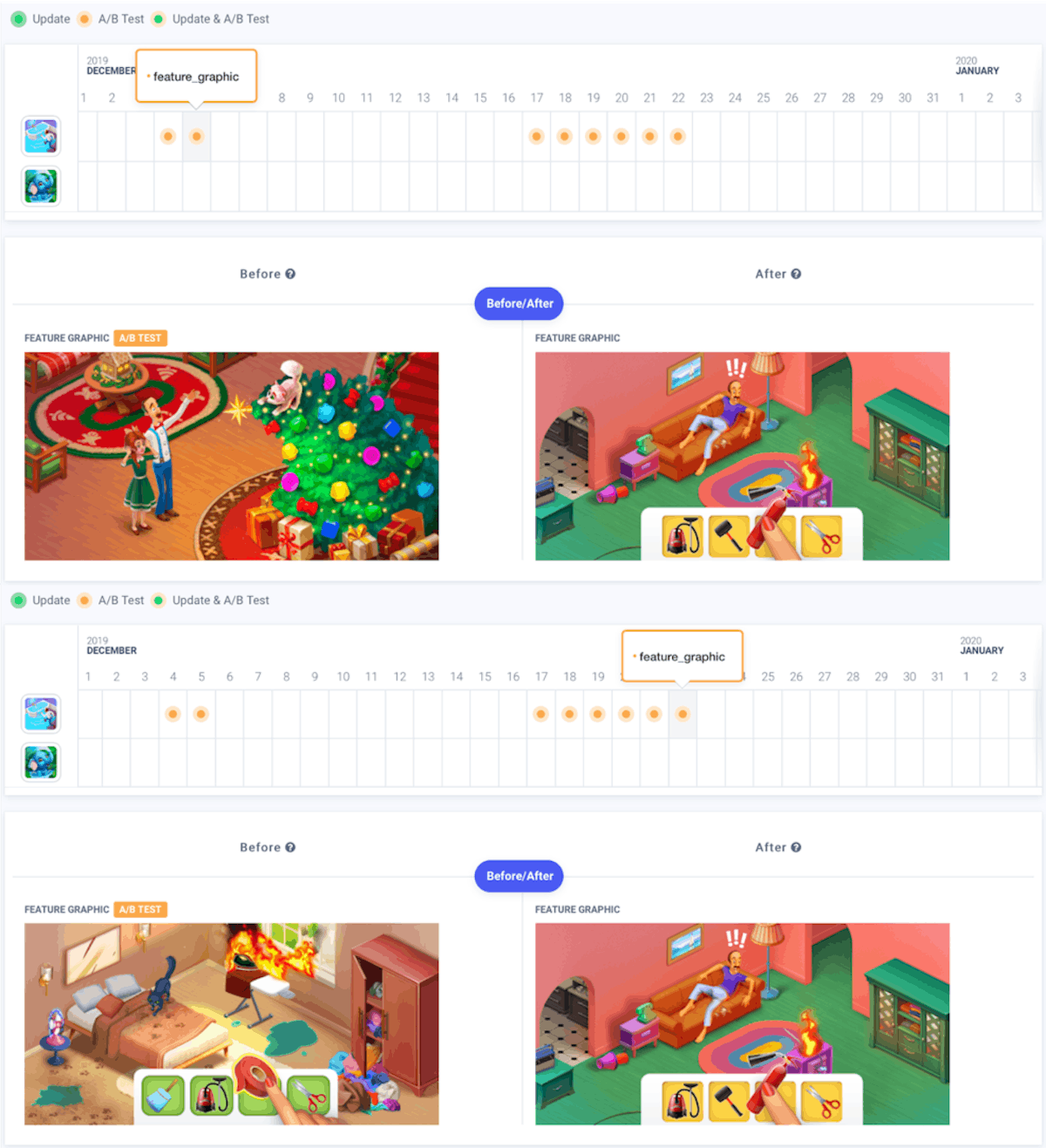

2. Seasonal A/B testing

You may notice that some apps try new versions of their metadata during popular seasons in order to follow the trend and get more downloads. You can check what your competitors A/B tested and take inspiration from the versions they decided to keep at the end of the test. This can give you valuable insights into best practices and into where to invest in your own A/B testing strategy.

We looked at the feature graphic changes for the game Gardenscapes in the US during the Christmas season. Gardenscapes tested a Christmas feature graphic at the beginning of the month. However, they quickly changed it back to a graphic unrelated to the Christmas theme. With the Christmas picture, the principle of the game was not clear enough and the brand was not recognizable enough to drive downloads. As a result, on December 22, they settled on a feature graphic that showcases the game better, without Christmas elements.

Learn some tips on seasonal updates

3. Get insights into your app’s category

Sometimes, you can learn not only about the frequency of A/B tests but also about their final results. Let’s say your app is in the food & drink category and you are not sure whether your first screenshots should show food or your app’s features. The best way to decide is to look at your competitors and see what they have done.

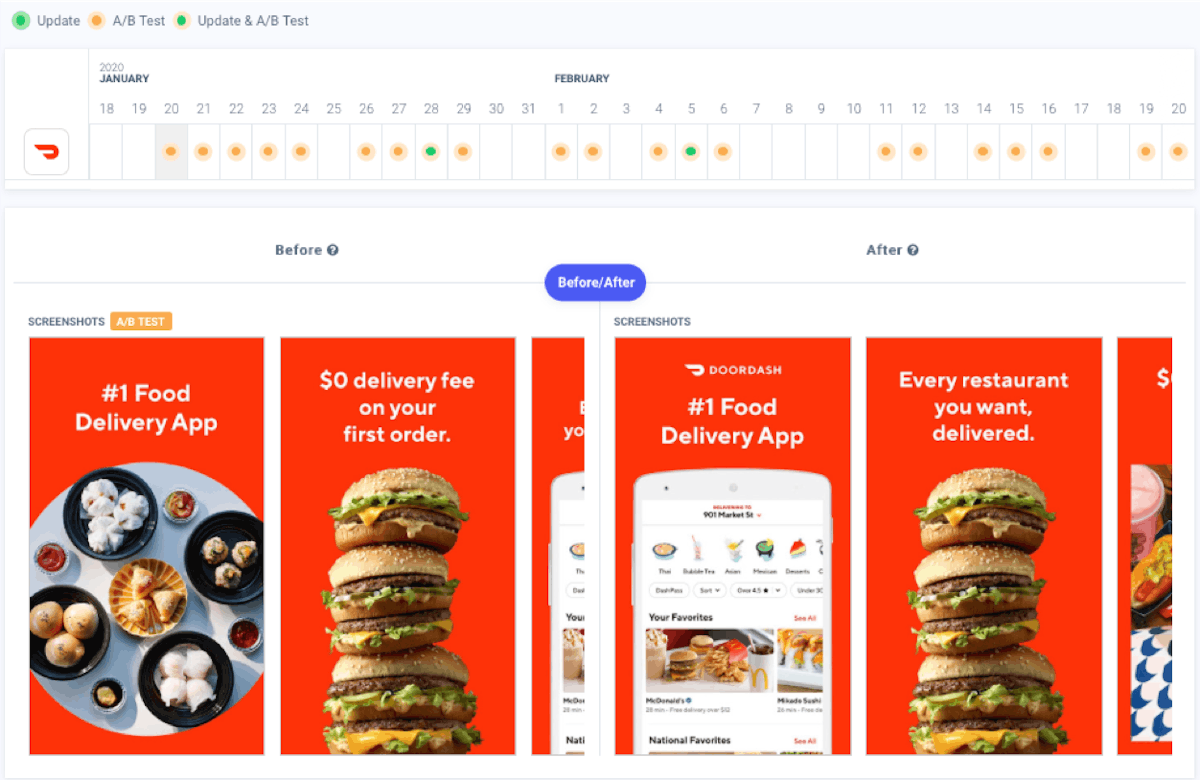

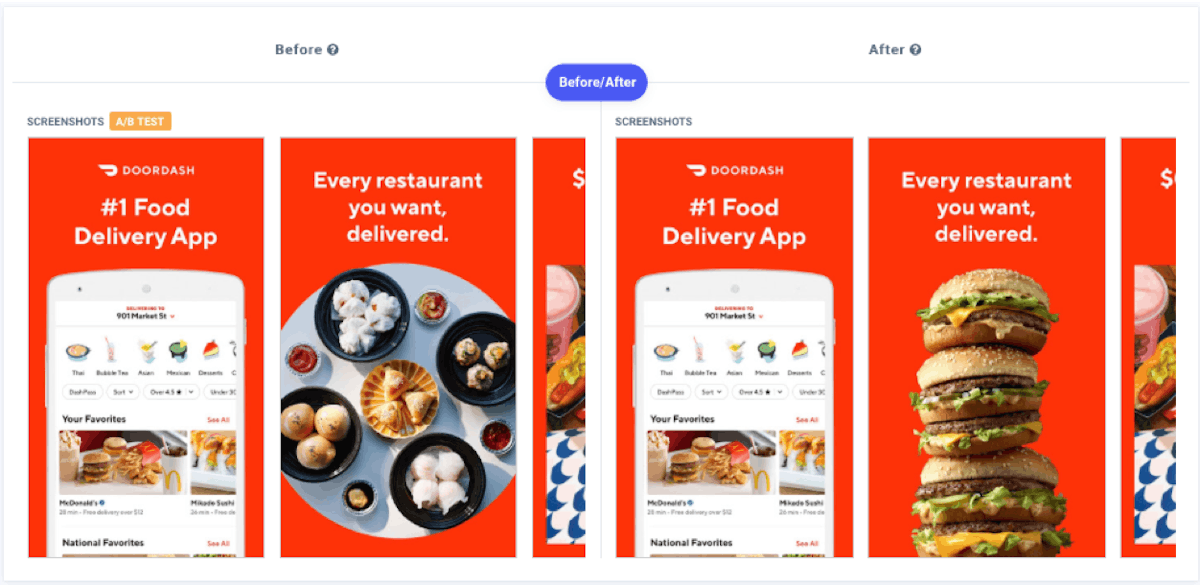

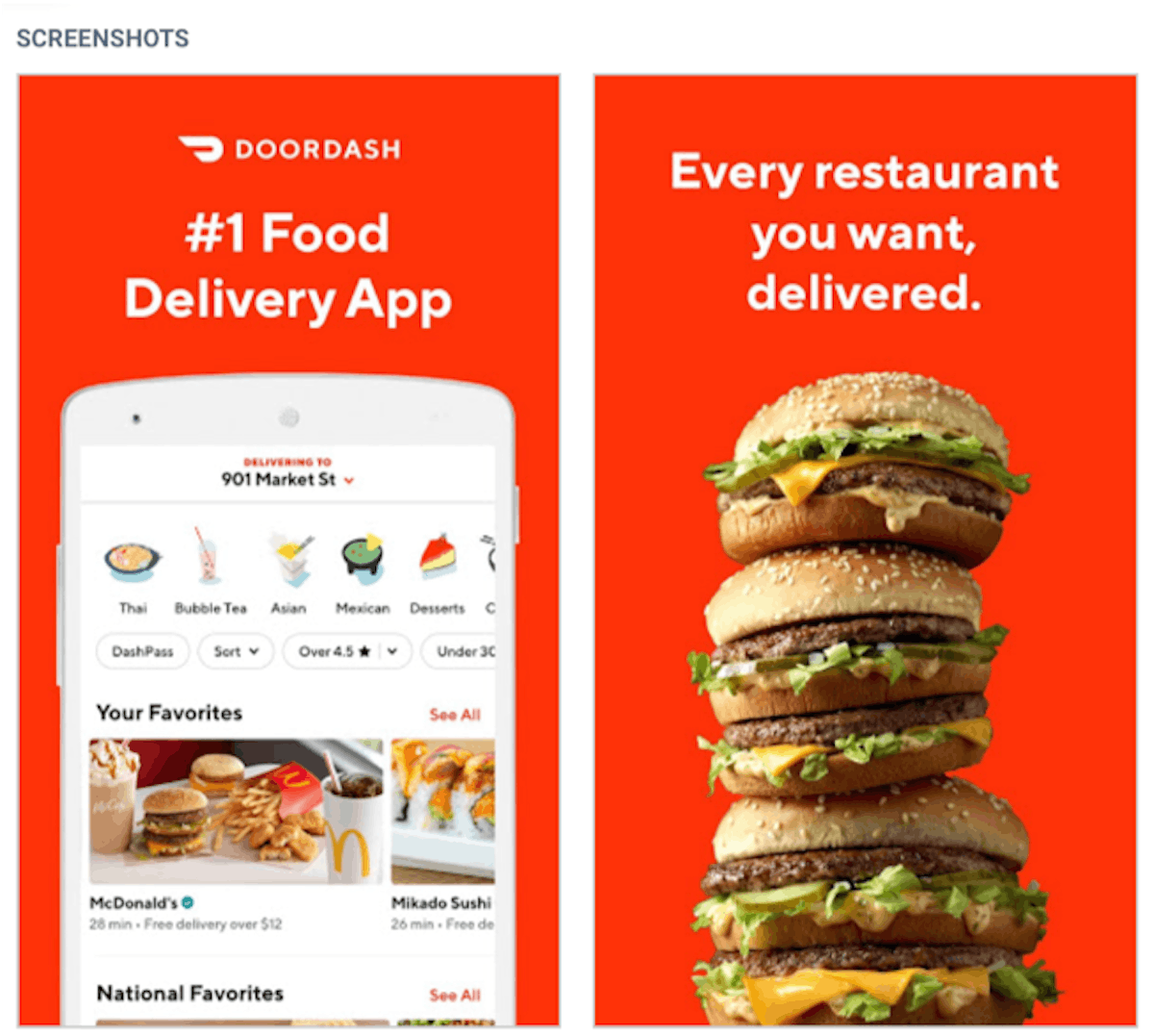

Here, we took the example of DoorDash, a food delivery app in the US. They started by testing whether two screenshots of food would work better than a pair in which the first screenshot shows the app’s functionality and the second depicts food.

At the end of this experiment, we noticed that they went with the second option. Then they wanted to see which kind of food to show, which led to another A/B test.

Over the course of a month, they switched between three food options (Asian food, tacos, and burgers) and chose the burger screenshot to be placed second in their screenshots.

In the end, they settled on a screenshot displaying their app’s features and another one featuring a stack of burgers.

Start fine-tuning your A/B testing strategy!

To summarize, with AppTweak’s A/B testing tool, you will be able to:

- Understand the overall situation – if your competitors are A/B testing or not

- Know how often competitors run A/B tests and how long each test lasts

- Scrutinize the strategy of each competitor – which metadata is a priority?

- See if there are seasonal A/B tests

- Learn the best practices from the leaders of your category

Sukanya Sur

Micah Motta

Georgia Shepherd

Anne Jamet